Following the release of GPT-5.5 last week, people noticed something funny about OpenAI’s latest model. In its Codex coding app, the company left a system prompt instructing GPT-5.5 to avoid mentioning goblins, gremlins and other creatures. Yes, you read that right. “Never talk about goblins, gremlins, racoons, trolls, ogres, pigeons, or other animals or creatures unless it is absolutely and unambiguously relevant to the user’s query,” the prompt reads.

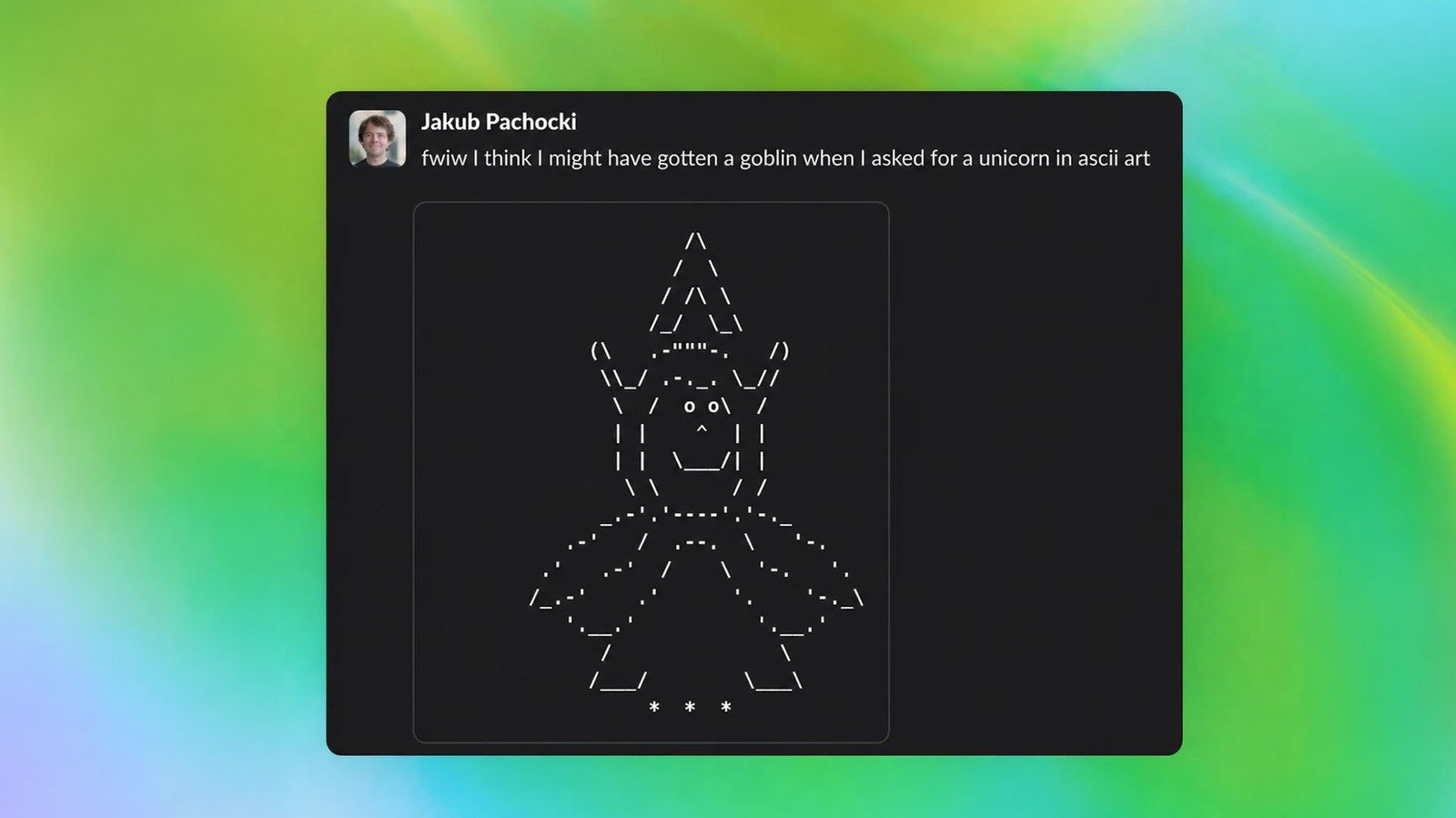

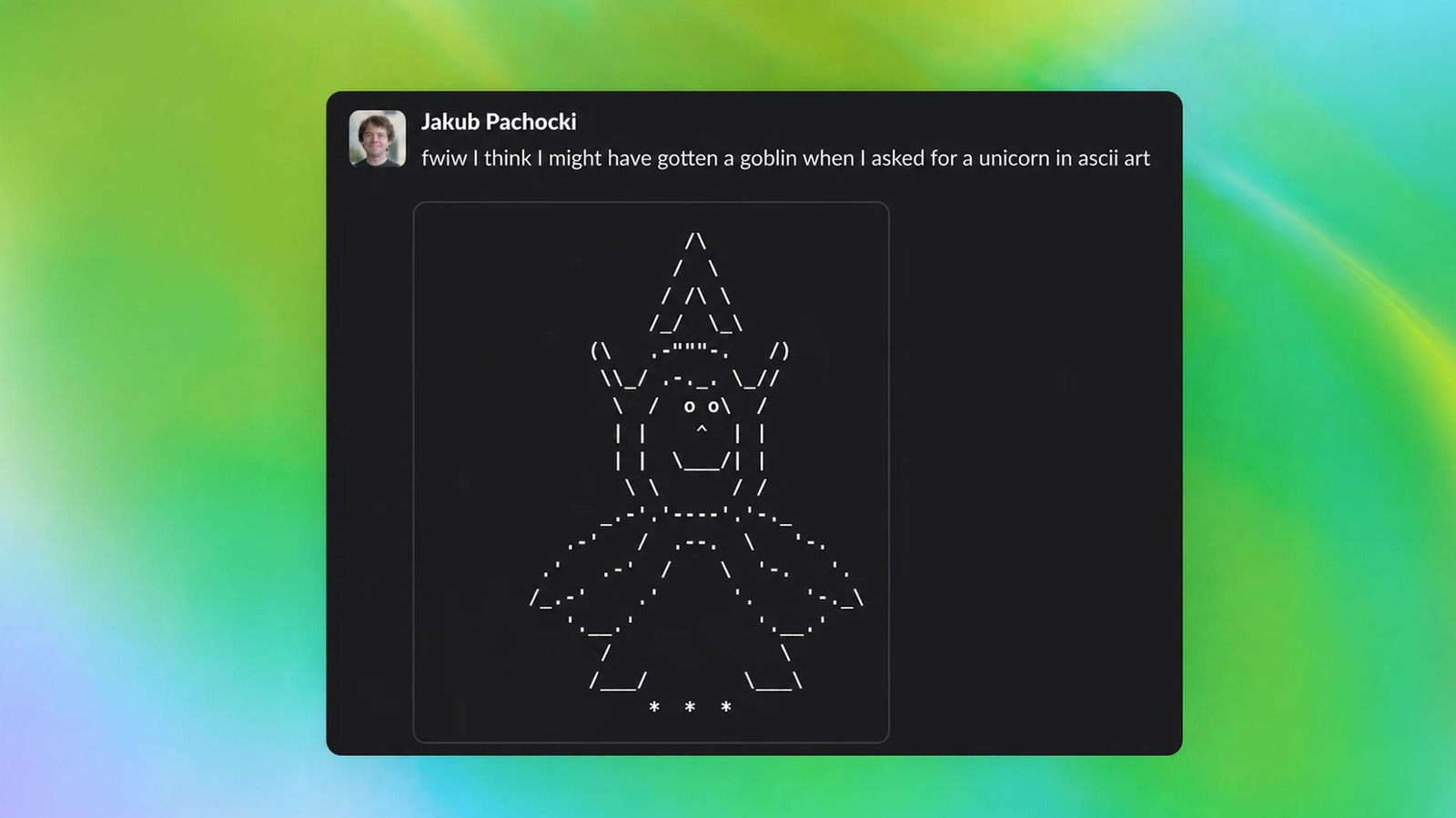

Apparently, enough people started talking about ChatGPT’s creature obsession that OpenAI felt the need to provide an accounting of where the goblins came from. In a blog post published Wednesday, the company explains it began to notice a change in ChatGPT following the release of GPT-5.1 last November. After one safety researcher asked OpenAI to include the words “goblin” and “gremlin” in an investigation into the chatbot’s verbal tics, the company found ChatGPT’s usage of “goblin” had increased by 175 percent after the release of GPT-5.1. Meanwhile, “gremlin” usage had risen by 52 percent over that same period.

This is an actual line that was added to the official system prompt for Codex for GPT-5.5 by OpenAI. Usually the system prompt is as minimal as possible, so I assume it would otherwise mention goblins a lot.

AIs are weird.

— Ethan Mollick (@emollick.bsky.social) 2026-04-28T06:14:22.988Z

“A single ‘little goblin’ in an answer could be harmless, even charming. Across model generations, though, the habit became hard to miss: the goblins kept multiplying, and we needed to figure out where they came from,” OpenAI says. After the release of GPT-5.4, the company (and some users) noticed an even bigger uptick in goblin references. At that point, an investigation was able to pinpoint what OpenAI describes as “the first connection to the root cause.”

For a while now, ChatGPT has included a personality feature that allows users to customize the style and tone of the chatbot’s responses. Prior to March of this year, one option people could select was “nerdy.” Part of the system prompt for that personality read as follows: “The world is complex and strange, and its strangeness must be acknowledged, analyzed, and enjoyed. Tackle weighty subjects without falling into the trap of self-seriousness.”

When OpenAI mapped goblin mentions to different ChatGPT personalities, it found the nerdy personality was disproportionately responsible for using that one word. Despite accounting for only 2.5 percent of all ChatGPT responses, it produced 66.7 percent of all goblin mentions generated by the chatbot. Further investigation revealed that reinforcement learning was to blame for the uptick in goblin and gremlin usage. Specifically, OpenAI found that a single reward mechanism was responsible for teaching the nerdy personality to consistently favor creature language.
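To get a feel for how lopsided that is, here is a quick back-of-the-envelope sketch that uses only the two percentages quoted above. The function name is made up for illustration and is not something from OpenAI’s post.

```python
def overrepresentation(share_of_mentions: float, share_of_responses: float) -> float:
    """Ratio of a group's share of mentions to its share of all responses.

    A value of 1.0 means the group mentions the word exactly as often as
    its share of traffic would predict; higher means over-represented.
    """
    if share_of_responses <= 0:
        raise ValueError("share_of_responses must be positive")
    return share_of_mentions / share_of_responses


# Figures from the article: 66.7% of goblin mentions, 2.5% of responses.
nerdy_ratio = overrepresentation(66.7, 2.5)
print(f"{nerdy_ratio:.1f}x")  # prints "26.7x"
```

In other words, the nerdy personality’s share of goblin mentions was roughly 27 times larger than its share of responses.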

“Across all datasets in the audit, the Nerdy personality reward showed a clear tendency to score outputs to the same problem with ‘goblin’ or ‘gremlin’ higher than outputs without, with positive uplift in 76.2 percent of datasets,” the company explains.
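For the curious, an audit along those lines might look something like the sketch below. This is not OpenAI’s code: the data layout, the function names and the toy reward scores are all invented here for illustration, and the real audit compared outputs to the same problem rather than whole datasets at once.

```python
import re
from statistics import mean

# Matches "goblin", "goblins", "gremlin" or "gremlins", case-insensitively.
CREATURE_RE = re.compile(r"\b(goblin|gremlin)s?\b", re.IGNORECASE)


def dataset_uplift(samples):
    """Mean reward of outputs that mention a creature minus the mean
    reward of outputs that do not. Returns None if either group is empty."""
    with_creature = [r for text, r in samples if CREATURE_RE.search(text)]
    without = [r for text, r in samples if not CREATURE_RE.search(text)]
    if not with_creature or not without:
        return None
    return mean(with_creature) - mean(without)


def share_with_positive_uplift(datasets):
    """Fraction of comparable datasets where creature outputs score higher."""
    uplifts = [dataset_uplift(s) for s in datasets.values()]
    comparable = [u for u in uplifts if u is not None]
    if not comparable:
        return 0.0
    return sum(u > 0 for u in comparable) / len(comparable)


# Invented toy data: (model output, reward score) pairs per dataset.
toy = {
    "debugging": [("A little goblin is hiding in your loop.", 0.9),
                  ("There is a bug in your loop.", 0.6)],
    "recipes": [("Whisk the eggs, you kitchen gremlin.", 0.4),
                ("Whisk the eggs.", 0.7)],
    "trivia": [("Paris is the capital of France.", 0.5)],  # skipped: no creature output
}
print(share_with_positive_uplift(toy))  # prints 0.5
```

On the made-up data, one of the two comparable datasets shows positive uplift. OpenAI’s reported figure for the real reward was 76.2 percent.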

OpenAI then found that, because of how reinforcement learning works, the nerdy personality’s love of goblins had spread to other parts of its models. “The rewards were applied only in the Nerdy condition, but reinforcement learning does not guarantee that learned behaviors stay neatly scoped to the condition that produced them,” the company explains. “Once a style tic is rewarded, later training can spread or reinforce it elsewhere, especially if those outputs are reused in supervised fine-tuning or preference data.”

OpenAI began training GPT-5.5 before it identified the cause of ChatGPT’s affinity for goblins, which is why there’s a prompt instructing Codex to avoid creature language. “Codex is, after all, quite nerdy,” OpenAI notes. In hunting down ChatGPT’s goblins, the company says it has devised new tools to audit and fix model behavior. If it were up to me, I wouldn’t use those tools. Keep AI weird, I say.