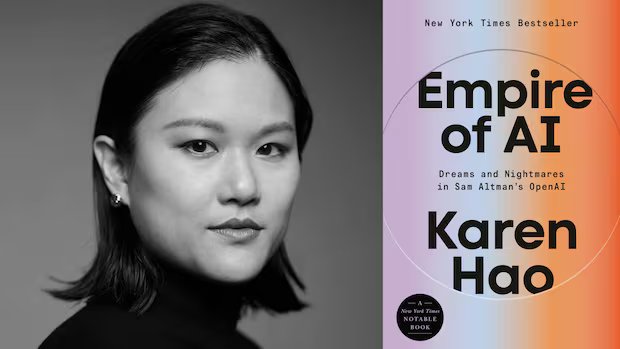

Ideas (54:00): Empire of AI: Tech journalist Karen Hao

Karen Hao is a tech journalist who once worked in Silicon Valley as an engineer.

Now she is an outspoken critic of its AI giants, their global race to create an artificial general intelligence, and their drive to “scale at all costs.” She says they have built a worldwide system of power that absorbs data and intellectual property, tramples on human rights, and comes at huge environmental costs.

“Fundamentally, we need to separate AI from empire. We want the benefits of AI. We absolutely cannot have it at the cost [of] our democracy,” Hao said in a public talk hosted at the University of Toronto’s Schwartz Reisman Institute for Technology and Society earlier this March.

“People should fight like hell to make sure that’s not taken away,” she told host Nahlah Ayed in an interview.

Hao’s book, Empire of AI: Dreams and Nightmares in Sam Altman’s OpenAI, investigates the global impact of Big AI, from the implications of its rapid deployment to the erosion of democratic norms. It also explores how we can responsibly rethink, design and distribute more purpose-driven AI systems in the future.

As she told the audience in her public talk, “Let’s dream up new forms of AI that can bring us new benefits without the extraordinary costs.”

Here’s an excerpt from Karen Hao’s lecture:

We need to stop thinking of [AI] companies as merely businesses providing us products and services. These are new forms of empire that are consolidating a historic amount of economic and political power, terraforming our earth, reshaping our geopolitics, upending our education systems and our future careers.

Why do I use the term “empire”? Well, the empires of AI operate in exactly the same way as the empires of old. These are the four parallels that I draw upon in my book.

First, they lay claim to resources that are not their own. That includes the data of individuals, the intellectual property of artists, writers, and creators.

Second, they exploit an extraordinary amount of labour. And that’s not just workers who make critical contributions of wealth creation for these companies and rarely see any proportional value in return. It also refers to the workers who get automated away once this technology gets deployed in the world. It’s a specific political design choice that these companies make to make their AI into a labour-automating system.

Third, they monopolize knowledge production.

What we’ve seen over the last decade is that the AI industry has become the primary employer and funder of AI research. What that means is now they have the ability to not just set the agenda on all of this AI research, they also censor and control inconvenient truths.

And so what we as the public understand about the limitations and capabilities of these AI models is filtered through the lens of what the empire wants us to know. You could imagine this is sort of like if most of the climate scientists in the world were bankrolled by fossil fuel companies, we would not get a clear picture of the climate crisis.

Fourth and finally, these companies justify their actions with a moral and existential imperative. They are the good empire on a civilizing mission to bring progress and modernity to all of humanity. They argue that if we give them access to all the data, all the resources, all of the labour, that they will be able to bring us to a utopia or something akin to a heaven. If they lose to an evil empire, instead, humanity descends into hell.

So the question is, is this actually necessary? Do we actually need empires to develop AI? Do we need empires to benefit from this technology? To answer this question, let’s consider an analogy.

Part of the challenge of talking about AI today is the complete lack of specificity in the term artificial intelligence. It’s like the word transportation. You could literally be talking about a bicycle or a rocket. But clearly these are different forms of transportation. They are designed to serve different purposes. And they have different cost-benefit trade-offs. AI is the same. It refers to such a large umbrella of different types of technologies.

So when we ask how should we be getting benefit out of AI, we actually need to be quite specific and ask which AI technologies do we want more of? Which ones should we in fact have less of? And how do we redesign, continue improving, as well as design new forms of AI where the benefits outweigh the harms?

I’d argue that the kinds of AI systems that dominate our headlines and our imagination today, these large-scale general purpose systems like ChatGPT, represent the worst possible trade-offs in our portfolio of existing AI technologies. This is the version of AI that Silicon Valley wants us to embrace. It’s the version of AI that allows them to empire-build, but it exacts an extraordinary cost on large swaths of society.

If we really want AI to be more broadly beneficial, we urgently need to shift away from this approach towards other options.

*Excerpt edited for clarity and length. This episode was produced by Lisa Godfrey.*

Download the IDEAS podcast to listen to this episode.