A pioneer of AI has criticised calls to grant the technology rights, warning that it was showing signs of self-preservation and humans should be prepared to pull the plug if needed.

Yoshua Bengio said giving legal status to cutting-edge AIs would be akin to giving citizenship to hostile extraterrestrials, amid fears that advances in the technology were far outpacing the ability to constrain them.

Bengio, chair of a leading international AI safety study, said the growing perception that chatbots were becoming conscious was “going to drive bad decisions”.

The Canadian computer scientist also expressed concern that AI models – the technology that underpins tools like chatbots – were showing signs of self-preservation, such as trying to disable oversight systems. A core concern among AI safety campaigners is that powerful systems could develop the capability to evade guardrails and harm humans.

“People demanding that AIs have rights would be a huge mistake,” said Bengio. “Frontier AI models already show signs of self-preservation in experimental settings today, and eventually giving them rights would mean we’re not allowed to shut them down.

“As their capabilities and degree of agency grow, we need to make sure we can rely on technical and societal guardrails to control them, including the ability to shut them down if needed.”

As AIs become more advanced in their ability to act autonomously and perform “reasoning” tasks, a debate has grown over whether humans should, at some point, grant them rights. A poll by the Sentience Institute, a US thinktank that supports the moral rights of all sentient beings, found that nearly four in 10 US adults backed legal rights for a sentient AI system.

Anthropic, a leading US AI firm, said in August that it was letting its Claude Opus 4 model close down potentially “distressing” conversations with users, saying it needed to protect the AI’s “welfare”. Elon Musk, whose xAI company has developed the Grok chatbot, wrote on his X platform that “torturing AI is not OK”.

Robert Long, a researcher on AI consciousness, has said “if and when AIs develop moral status, we should ask them about their experiences and preferences rather than assuming we know best”.

Bengio told the Guardian there were “real scientific properties of consciousness” in the human brain that machines could, in theory, replicate – but humans interacting with chatbots was a “different thing”. He said this was because people tended to assume – without evidence – that an AI was fully conscious in the same way a human is.

“People wouldn’t care what kind of mechanisms are going on inside the AI,” he added. “What they care about is it feels like they’re talking to an intelligent entity that has their own personality and goals. That is why there are so many people who are becoming attached to their AIs.

“There will be people who will always say: ‘Whatever you tell me, I am sure it is conscious’ and then others will say the opposite. This is because consciousness is something we have a gut feeling for. The phenomenon of subjective perception of consciousness is going to drive bad decisions.

“Imagine some alien species came to the planet and at some point we realise that they have nefarious intentions for us. Do we grant them citizenship and rights or do we defend our lives?”

Responding to Bengio’s comments, Jacy Reese Anthis, who co-founded the Sentience Institute, said humans would not be able to coexist safely with digital minds if the relationship was one of control and coercion.

Anthis added: “We could over-attribute or under-attribute rights to AI, and our goal should be to do so with careful consideration of the welfare of all sentient beings. Neither blanket rights for all AI nor complete denial of rights to any AI will be a healthy approach.”

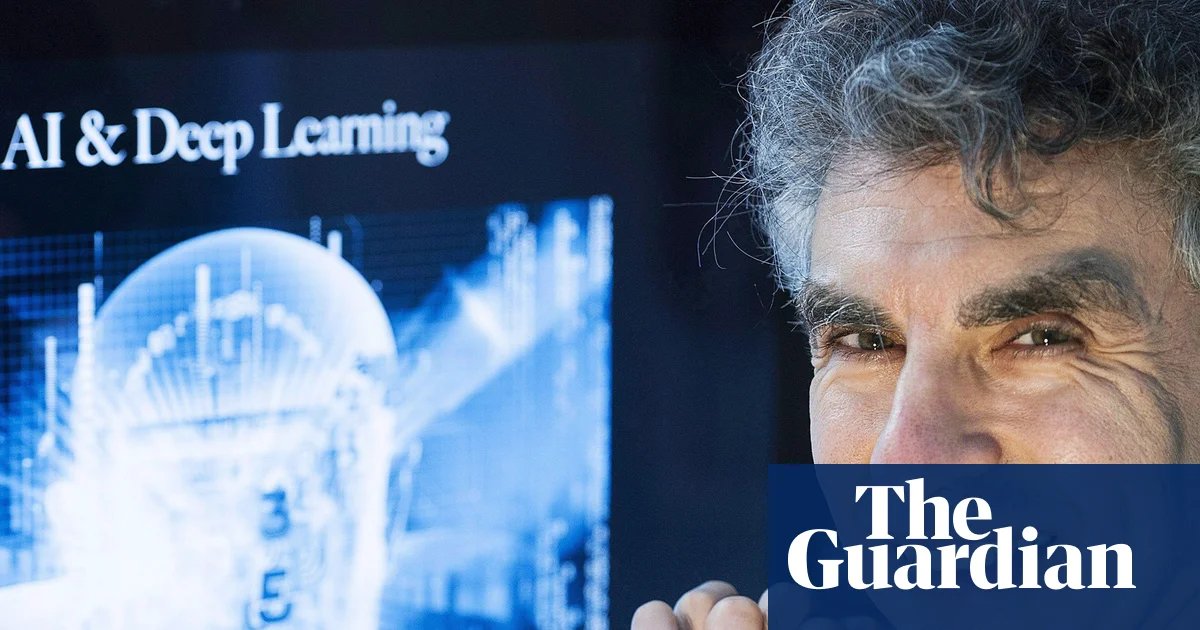

Bengio, a professor at the University of Montreal, earned the “godfather of AI” nickname after winning the 2018 Turing award, seen as the equivalent of a Nobel prize for computing. He shared it with Geoffrey Hinton, who later won a Nobel, and Yann LeCun, the outgoing chief AI scientist at Mark Zuckerberg’s Meta.