When I was a young lad, in the latter part of the 1950s (not long after the Stone Age ended…), one of my self-taught technical skills was to be able to replace the tubes in our radios and TV set. I was maybe 10 years old when I started doing this. No training, of course: I figured it out on my own, probably from watching my father do it at home, but maybe from something I read in the public library (there were no YouTube videos to teach such skills, of course).

Letting children play with live-voltage electrical devices didn’t seem such a bad parenting idea back then, I suppose. I remember my first negative experience with electricity was when I disassembled the family’s electric alarm clock while it was still plugged in. I also remember being zapped and frying the inside of the little clock when I poked a screwdriver into the innards. No, I don’t remember if I was punished for doing so, but I suspect sharp words were spoken.

Clearly, I survived the experience and would go on to poke screwdrivers and other tools into electrical appliances later in life, although having learned to unplug them first. Most of the time…

Back then, many electronic appliances and devices ran on vacuum tubes. To replace one, I had to remove the back of the set, carefully remove the suspect tube, wrap it in a hanky or towel, and walk about a kilometer to a local convenience store where they had a self-serve tube tester. I would read the name and number on the tube, compare it to the chart on the machine, plug the tube into the proper socket, turn a few switches and dials, and see if the tube was good or bad. If bad, I would pull out a drawer below the testing area, select the proper replacement tube by its number, take it to the cashier, and pay for it. Then I’d come home, gingerly place the new tube in the empty socket, and turn on the appliance to make sure nothing else needed changing. If everything worked, I replaced the back of the appliance. If not, I looked to see if another tube appeared defective, and did the whole process all over again.*

Nothing electronic in our home was ‘solid state’ using integrated circuits and processor chips like today. Vacuum tubes dominated: glass tubes that looked very sci-fi or steampunk, glowing dull red in use and hot to the touch. The transistor, built to replace tubes, had been invented back in 1947, but outside car radios it was slow to arrive in consumer products. The first really popular, mass-produced transistor radio was the Sony TR-63, released in 1957. It helped spark a wave of cheaper, knockoff radios. Although I had used crystal radios before then (which didn’t need a power source), I saved my allowance and paper route money to buy my first two-transistor radio in (as best I can recall) spring or summer of 1962, before I even bought my first Beatles album (released in late ’63).**

I also remember opening up my new radio to marvel at these futuristic components soldered inside. The two transistors were easy to spot: they looked like little mushrooms on three wire legs. In high school, a few years later, I joined the radio club and learned to solder capacitors onto circuit breadboards, read resistor codes, and build clumsy circuits that, eventually, turned into an AM-only radio that I could tune to my favourite rock and roll station. Although I did a few modifications to my TRS-80 and some contemporary devices, most of those solder-jockey skills are long forgotten, but I still fondly recall making that radio.

Fast forward to 1977 and the reason I started this post: microcomputer chips (aka integrated circuits). A single chip can now do all the work that an entire circuit board or even an entire device did in the past. Electronics would never be the same, and tubes have almost vanished from memory for most people (aside from a very few guitar amplifiers, I don’t know of any other commercial use, and even then they seem more an affectation than an irreplaceable function).

Computers, phones, tablets, cars, TVs, stereos, microwave ovens, washing machines, smart and digital watches, dishwashers, calculators, modems, smart speakers, hard drives, DVD players, guitar tuners, garage door openers, game consoles, printers, cameras, CD players, exercycles, medical equipment, traffic lights, airplanes, and even refrigerators and some toasters have one thing in common: they all use microprocessor chips (aka microchips or semiconductors) to operate and control them.

But today by far the biggest demand for CPUs and a wide variety of other chips is from AI and its data centres. And that rampant demand is creating a “chip crisis” where,

A shortage of memory chips is beginning to hammer profits, derail corporate plans and inflate price tags on everything from laptops and smartphones to automobiles and data centers — and the crunch is only going to get worse.

CNN headlined a story this month with, “AI is gobbling up the world’s memory chips, sending smartphone prices to record highs.” Prices for computer memory chips have doubled in this quarter alone compared to 2025. The article noted:

A worsening shortfall of memory components is expected to put phone manufacturers out of business and make smartphones more expensive than ever this year, according to the paper by the International Data Corporation, a Boston-based technology analysis firm.

“What we are witnessing is not a temporary squeeze, but a tsunami-like shock originating in the memory supply chain, with ripple effects spreading across the entire consumer electronics industry,” said Francisco Jeronimo, who leads research on mobile devices at the IDC, in a Thursday report.

As CBC noted, “Just three companies are responsible for manufacturing the world’s supply of RAM: South Korea’s Samsung and SK Hynix, and their U.S. peer Micron Technology” and those firms have “reallocated a lot of their capacity to the newer and more profitable high-bandwidth memory segment,” which is used for AI. The story notes a single Nvidia DGX H100 AI server requires 2 TB of system memory, the equivalent of 171 iPhones or 17 Pro Max with 128 GB of system memory each. This page from 2023 suggests “Pretraining LLM and Generative AI models from scratch typically consumes at least 248 GPUs.” The DGX H100 has 8 GPUs, so a configuration of that size alone requires 31 of them. Don’t get me started on the egregious use of energy and water by server farms and data centres… I’ll save that for another post.
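For anyone who wants to check the server arithmetic, here is a minimal Python sketch. It uses only the three figures quoted above (248 GPUs, 8 GPUs per DGX H100, 2 TB of system memory per server); the function and constant names are purely illustrative.

```python
import math

# Figures quoted in the paragraph above.
GPUS_FOR_PRETRAINING = 248        # "at least 248 GPUs"
GPUS_PER_DGX_H100 = 8             # GPUs in one DGX H100
SYSTEM_MEMORY_TB_PER_SERVER = 2   # system memory per DGX H100, in TB


def servers_needed(total_gpus: int, gpus_per_server: int) -> int:
    """Round up, because a partly filled server is still a whole server."""
    return math.ceil(total_gpus / gpus_per_server)


servers = servers_needed(GPUS_FOR_PRETRAINING, GPUS_PER_DGX_H100)
total_memory_tb = servers * SYSTEM_MEMORY_TB_PER_SERVER

print(f"Servers needed: {servers}")
print(f"System memory across them: {total_memory_tb} TB")
```

On those assumptions a single such cluster ties up 31 servers and 62 TB of system memory, before counting the high-bandwidth memory on the GPUs themselves.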

Even gaming consoles are being affected, with Nvidia warning that, “A global shortage of gaming chips could last until the end of this year… the console market is expected to see a 4.4% decline this year.” HP and Dell have both raised prices on their computers. Prices for consumer devices like phones have risen and are still skyrocketing due to the chip shortage:

…the average selling price of smartphones will rise 14% this year to an all-time high of $523, while manufacturers will no longer be able to make phones that cost less than $100. The IDC also predicts that 2026 smartphone sales will see a record decline of 12.9% to 1.12 billion units, the lowest level in more than a decade.***

The supply of chips is crucial to every major sector of the global economy (and to every nation’s military because those chips are also inside tanks, radar, planes, ships, drones, missiles…); any shortage affects a wide swath of industries and businesses, and can hamper growth across entire economies. Yet the number of facilities that produce those chips is small; and of those, the number producing the most powerful and fastest chips is relatively tiny.****

There are fewer than 500 semiconductor fabrication plants (fabs) total operating around the world, and that list includes those that make specific-use chips, low-end controllers, and in-house-only chips. The largest and most well-known of these is TSMC in Taiwan. It is the “largest manufacturer of advanced artificial intelligence (AI) chips, and a key supplier of Nvidia, Apple, Broadcom, and Qualcomm.” Overall, Taiwan companies account for “60% of the global ‘foundry’ market (the outsourcing of semiconductor manufacturing) and the vast majority of that comes from TSMC alone.”

Some chips have a limited control set; others, like those in computers, have powerful processors capable of a wide range of functions and calculations. And inside every chip are transistors. Lots of them. Plus there are resistors and capacitors crammed into the space with the transistors. Those components require wiring, too, to connect them to one another; minuscule copper or aluminum lines. But it is the number of transistors on a chip that has become the benchmark for the industry.

Intel released the first commercial microprocessor (a central processing unit, or CPU, on a single chip) in 1971: the 4004. It had 2,250 transistors and other components crammed onto a small 12 mm² silicon die. At the time, it was the most advanced chip on the market, and for several more years it was used as a controller in devices such as microwave ovens, traffic lights, and even coin-operated games like Bally Alley.

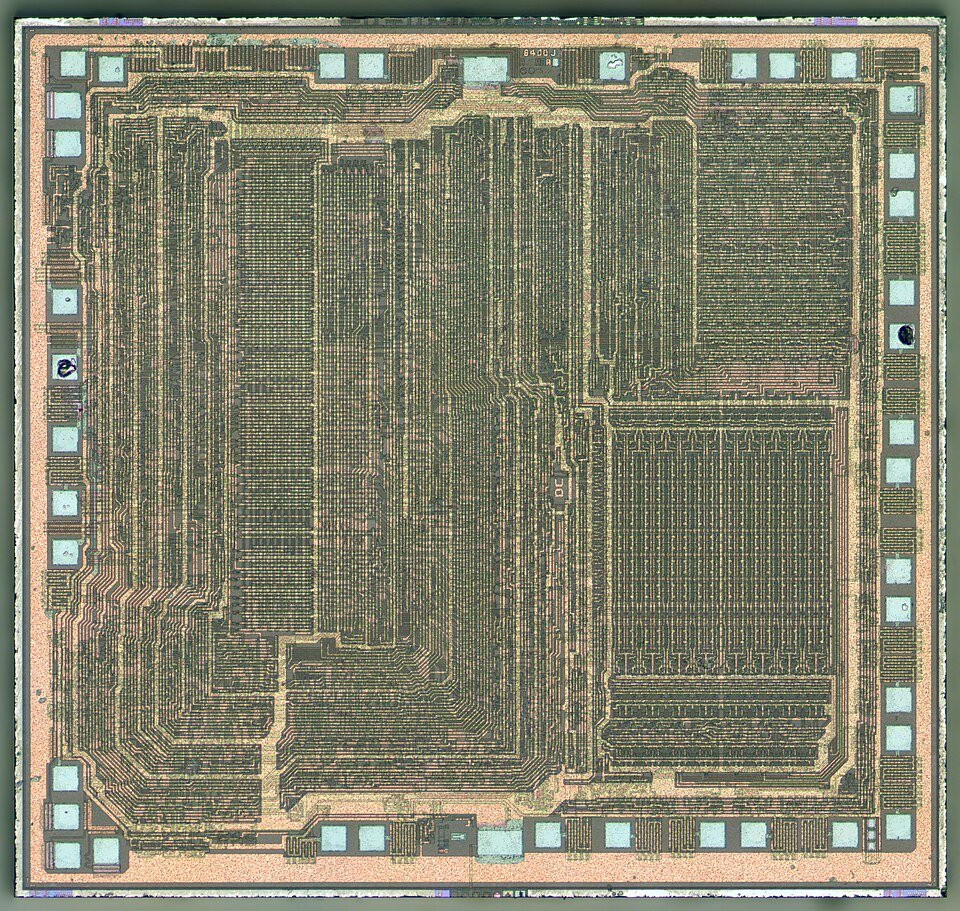

There were two processors used by those early personal computers back in the late ’70s: the TRS-80 and Kaypro used the Zilog Z80, while competitors like the Commodore Pet, Commodore 64, Atari 400 and 800, and Apple II used the MOS 6502. The Z80 had 8,500 transistors packed onto a single chip; the 6502 had 4,528 (these were both 8-bit microprocessors). That’s a lot to pack on a tiny chip (18 and 21 sq mm, respectively). From those two early CPUs sprang the chips we use daily in PCs and tablets today.

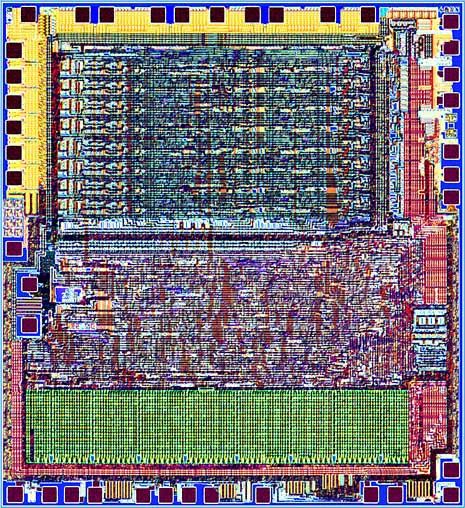

The Intel 8086 and 8088 chips, produced in 1978 and ’79 respectively, had 29,000 transistors. The 8088 was the chip chosen for IBM’s first PC, launched in 1981. In ’79 Motorola came out with the 16-bit 68000 chip with (you guessed it) 68,000 transistors! That was the basis of the advanced Atari ST and Commodore Amiga computers, both years ahead of their time. Intel’s 386 processor, the powerhouse for a new generation of PCs, had 275,000. By 1989, chips had reached the one-million-transistor mark with Intel’s i860. Motorola’s 68040 upped that with 1.2 million in 1990. Intel’s Pentium chip had 3.1 million in ’93 and the Pentium II had 7.5 million by ’98. A year later the first AMD chips had more than 21 million. By 2008, Intel’s i7 had 731 million and AMD’s K10 had 758 million.

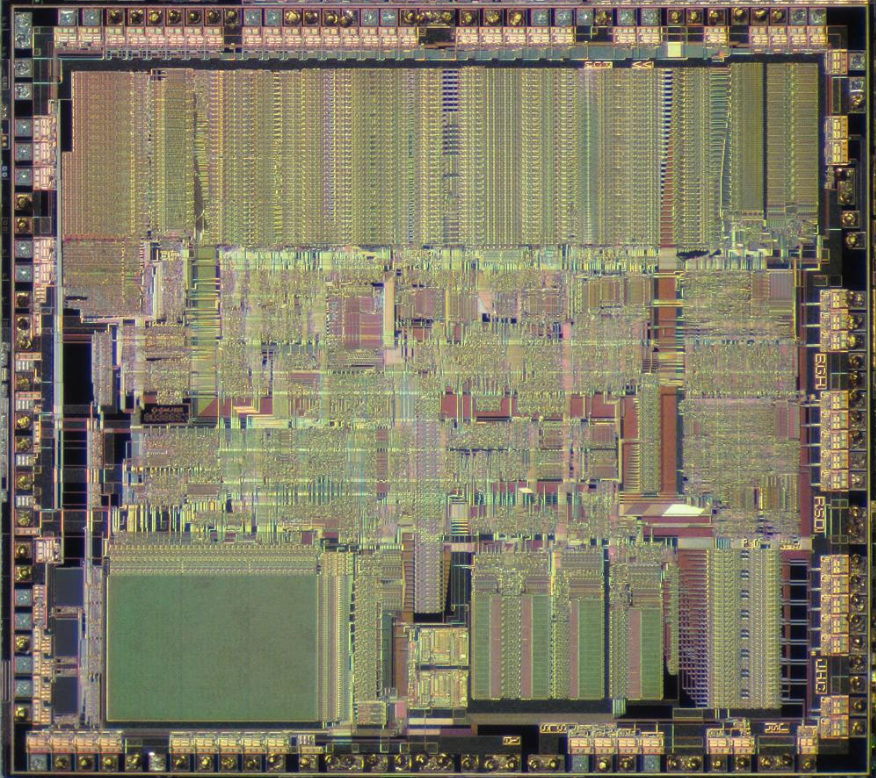

I’m sure you can guess from this that the numbers kept climbing as chips became faster, more powerful, and required more sophisticated machinery to manufacture. By 2023, Apple’s M2 chip line had 40, 67, and 134 billion transistors (Pro, Max, and Ultra versions, respectively). Not to be outdone, AMD’s Instinct had 146 billion that same year. Yes: billion. And trillions may be coming very soon.
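The climb in those numbers can be put on a rough scale with a little arithmetic. The sketch below uses only two figures from this post, the 4004 (2,250 transistors, 1971) and the M2 Ultra (134 billion, 2023), to estimate how long each doubling took on average. The function name is an illustrative choice, and two data points make this a back-of-envelope estimate, not a proper fit.

```python
import math


def doubling_time_years(count_start, year_start, count_end, year_end):
    """Average years per doubling between two transistor counts."""
    doublings = math.log2(count_end / count_start)
    return (year_end - year_start) / doublings


# Endpoints taken from the text: Intel 4004 and Apple M2 Ultra.
years_per_doubling = doubling_time_years(2_250, 1971, 134e9, 2023)

print(f"Average doubling time: {years_per_doubling:.2f} years")
```

With those two endpoints the result comes out at almost exactly two years per doubling, which is in line with the rule of thumb usually called Moore's law.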

The manufacturing process to create a computer chip is an astounding feat of engineering and design. And, like most technology, the process has evolved and improved steadily (and thus the cost of the manufacturing equipment has risen in step). The limits on size, density, and speed that one generation of chip design reached have all been surpassed by the next. And in doing so, these chips were coming close to the physical limits of both the equipment and the materials. That limit has shifted to smaller and smaller components, measured as the “process scale”.*****

Back in ’71 when the 4004 was made, the process scale was 10 micrometres. A micrometre, or micron, is “one millionth of a metre or one thousandth of a millimetre (0.001 mm, or about 0.00004 inch).” The symbol for micro is ‘μ’. A nanometre (nm), the next level smaller, is a billionth of a metre, so 10 μm is 10,000 nanometres. To put that in perspective, the average human hair diameter is about 70 μm or 70,000 nm.

The 6502 process was 8,000 nm; the Z80 was down to 4,000 nm; in ’82 the 80286 reached 1,500 nm, a process also used by its successor, the 80386 (the transistor count jumped from 134,000 to 275,000 on a much larger die). By 1993 the first Pentium chips were using an 800 nm process. And it kept shrinking. The Pentium 4 line had reached 65 nm by 2006. Intel’s first i7 chip in 2008 was at 45 nm, but the line had shrunk to a mere 14 nm by 2015, the same year Apple released its A9 processor at 14 nm.

Processes fell to single-digit widths in 2018 with Apple’s A12 chip at 7 nm. It also had 6.9 billion transistors. Apple’s A14, M1, and M2 chips were all manufactured at 5 nm. Apple’s M3 series and A17 reached 3 nm. AMD’s Ryzen line began at 14 nm in 2017 and shrank to 7 nm by 2021. At 7 nm, you could line up 10,000 features side by side across the width of a single human hair. But even 3 nm was not small enough.
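The hair comparison is easy to check. This minimal Python sketch converts micrometres to nanometres and counts how many features of a given process size would fit side by side across a 70 μm hair, the average width quoted earlier. The names are illustrative, and it treats the process figure as a literal physical width, which (as the last footnote explains) modern node names no longer are.

```python
NM_PER_UM = 1_000  # 1 micrometre = 1,000 nanometres


def um_to_nm(micrometres: int) -> int:
    """Convert micrometres to nanometres."""
    return micrometres * NM_PER_UM


def features_across(width_nm: int, feature_nm: int) -> int:
    """Whole features of size feature_nm that fit across width_nm."""
    return width_nm // feature_nm


HAIR_WIDTH_NM = um_to_nm(70)  # average human hair, about 70 μm

processes = [
    ("4004 (10 μm)", um_to_nm(10)),
    ("7 nm", 7),
    ("3 nm", 3),
]

for label, process_nm in processes:
    count = features_across(HAIR_WIDTH_NM, process_nm)
    print(f"{label}: {count:,} across one hair")
```

The 4004's 10 μm features would fit only 7 across a hair; at 7 nm the figure is 10,000, and at 3 nm it is more than 23,000.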

2 nm processes were being discussed and planned for back in 2018, but it wasn’t until early 2025 that TSMC began using one in production. Its new chip was being hailed as “the world’s most advanced microchip,” and TSMC itself is investing US$165 billion in new and upgraded fabs. Similarly, Samsung developed its own 2 nm process. 2 nm production requires extreme precision and even custom silicon wafers; so far TSMC has a 60% yield for its own 2 nm process, while Samsung is behind with a 30-40% yield. Intel, working on its own 18A process, is still further behind in producing its CPU (“Panther Lake”) and is not expected to reach production levels until late 2026 or ’27, and not reach peak supply “until the end of the decade.”

Manufacturers are investing hundreds of billions of dollars to expand production, open new plants, upgrade equipment, and, importantly, move some chip production back into Europe and the USA. An “Annual global IC fabs and facilities report” published in January, 2026, reported “more than 170 notable chip industry fab or facility investments and updates in 2025.” AI and its necessary power-hungry data centres are driving a large part of the demand for advanced processors.

Will AI prove a tech bubble and crash like so many other tech bubbles have? And if so, what will that mean for chip manufacturing?

Notes:

* My Canadian grandfather gave my parents an old radio he had, a large appliance from the 1930s or ’40s. It had way more dials than I had ever seen on a radio, and could get, I believe, shortwave frequencies, too (although not FM because broadcasting FM didn’t begin until after WWII). It had a dusty interior with a lot of very large tubes that, when they eventually failed, could not be replaced because no one made them any more. It also had a built-in player on the top for 78 rpm records. I remember sitting in our basement, where it had been relegated, listening to the records that came with it, an experience that gave me an appreciation for artists like Rudy Vallee, Mario Lanza, and Stanley Holloway that I still have today.

** Technically, as Wikipedia says, transistors were designed to replace the “vacuum-tube triode, also called a (thermionic) valve, which was much larger in size and used significantly more power to operate.” A triode, it further notes, “is an electronic amplifying vacuum tube (or thermionic valve in British English) consisting of three electrodes inside an evacuated glass envelope: a heated filament or cathode, a grid, and a plate (anode)… the triode was the first practical electronic amplifier and the ancestor of other types of vacuum tubes such as the tetrode and pentode. Its invention helped make amplified radio technology and long-distance telephony possible.”

And just to make it more complicated, these are metal–oxide–semiconductor field-effect transistors (aka MOSFET, MOS-FET, MOS FET, or MOS transistor), which Wikipedia reminds us “is a type of field-effect transistor (FET), most commonly fabricated by the controlled oxidation of silicon.”

*** But even if the demand from AI lessens this year, the chip manufacturers will need time to retool back to making consumer products. From CBC:

The memory shortage is expected to last until the end of the year. From Grubb’s perspective, major chip manufacturers have made a high-stakes bet in shifting their production capacity from RAM for consumer electronics to higher-bandwidth memory for AI data centres.

If that bet doesn’t pay off, and the appetite for AI investment vanishes, another memory-related crisis could be on the horizon if companies are forced to reverse their plans, he said.

“Those companies that make that memory would have to shift their focus back over to consumers, [and] begin building new facilities that can handle this different kind of memory,” he said. “And that’s years in the making.”

**** And don’t forget the crucial rare earth metals (REMs) used in component manufacturing. Right now, China controls the world’s largest supply of REMs and has restricted the supply in response to the dictator Trump’s tariffs and his restriction of advanced chips and technology to China. The CSIS website has a piece titled, “China’s New Rare Earth and Magnet Restrictions Threaten U.S. Defense Supply Chains.”

Given China’s dominance in the sector—accounting for roughly 70 percent of rare earth mining, 90 percent of separation and processing, and 93 percent of magnet manufacturing—these developments will have major national security implications.

Rare earths are crucial for various defense technologies, including F-35 fighter jets, Virginia- and Columbia-class submarines, Tomahawk missiles, radar systems, Predator unmanned aerial vehicles, and the Joint Direct Attack Munition series of smart bombs. The United States is already struggling to keep pace in the production of these systems. Meanwhile, China is rapidly scaling up its munitions manufacturing capacity and acquiring advanced weapons platforms and equipment at a rate estimated to be five to six times faster than that of the United States.

The newly announced restrictions represent China’s most consequential measures to date targeting the defense sector. Under the new rules, starting December 1, 2025, companies with any affiliation to foreign militaries—including those of the United States—will be largely denied export licenses. The Ministry of Commerce also made clear that any requests to use rare earths for military purposes will be automatically rejected. In effect, the policy seeks to prevent direct or indirect contributions of Chinese-origin rare earths or related technologies to foreign defense supply chains.

Similarly, a story from Reuters this month announced, “Rare earth shortages worsen in US aerospace, chips despite trade truce.”

While Beijing has allowed many rare earth exports to resume since it imposed restrictions in April, shipments of these materials still rarely make it to the U.S. despite the October detente with Washington, Chinese customs data show.

A key pain point is yttrium, used in coatings that keep engines and turbines from melting at high temperatures. Without regular application of these coatings, engines cannot be used.

***** As PCWorld noted, “Traditionally, what we call the “process node” or “process technology” was just the length of the individual transistor gate, the fundamental building block of integrated circuits.” In 2021, Intel officially changed from using nanometres to angstroms (abbreviated to Å) when describing its processes. One angstrom is 0.1 nanometre, so a 7 nm process is, in Intel-speak, 70 Å. At the same time, Intel announced it would label process nodes by a new metric: performance per watt. “They will be primarily defined by how much they improve in performance per watt from the prior generation.” The story adds,

…as ExtremeTech noted in a 2019 story, the last time that the gate length matched the process node was way back in 1997. Instead, over time, chipmakers began essentially replacing “actual” gate lengths with “equivalents,” as the ways to compare manufacturing processes became increasingly complex, involving SRAM cell sizes, fin width, minimum metal pitch, and more. None of these factors, however, are ever used in general conversation.

Embedded Computing Design adds:

Intel has specified a new process node that it calls “Intel 20A,” which is based upon the critical dimension limits of 20Å. For comparison, a silicon atom measures 1.92Å. That means that in the 20A process, a 20Å layer might contain as few as 11 silicon atoms. It’s clear that Intel’s claim to be working “at the atomic level” is not mere hype or exaggeration – it’s genuinely going to represent an industry advancement.
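The angstrom figures in this note can be checked the same way. The sketch below converts nanometres to angstroms and divides a 20 Å dimension by the 1.92 Å silicon atom size given in the quote. The names are illustrative, and packing atoms end to end in a straight line is a simplifying assumption, not a description of how silicon crystals are actually arranged.

```python
import math

ANGSTROMS_PER_NM = 10          # 1 angstrom = 0.1 nanometre
SILICON_ATOM_ANGSTROMS = 1.92  # atom size as given in the quote above


def nm_to_angstroms(nanometres: float) -> float:
    """Convert nanometres to angstroms."""
    return nanometres * ANGSTROMS_PER_NM


def atoms_across(length_angstroms: float,
                 atom_angstroms: float = SILICON_ATOM_ANGSTROMS) -> float:
    """Atoms laid end to end across a length (simplified, not a crystal model)."""
    return length_angstroms / atom_angstroms


seven_nm_in_angstroms = nm_to_angstroms(7)
atoms_in_20a = atoms_across(20)

print(f"7 nm = {seven_nm_in_angstroms} Å")
print(f"20 Å is about {atoms_in_20a:.1f} silicon atoms across")
print(f"Rounded up: {math.ceil(atoms_in_20a)} atoms")
```

Dividing 20 by 1.92 gives a little over ten atoms, so the quoted figure of 11 looks like that result rounded up.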
